Facebook is introducing new tools in an effort to stop the sharing of fake news on its platform. The move comes after the social media giant was scrutinised for its role in allowing false information to spread during the 2016 U.S. presidential election.

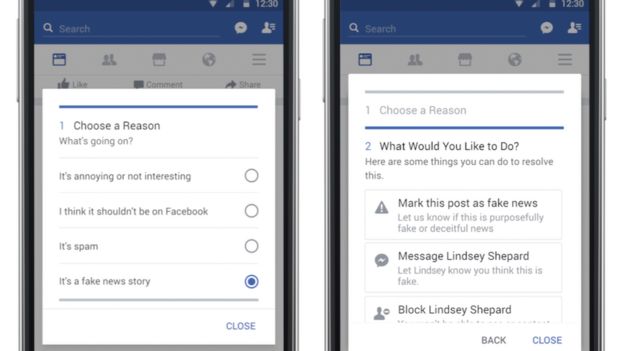

Changes to Facebook’s algorithm will tighten the rules on advertising and allow readers to flag content as a “fake news story”. The company will work with fact-checking organisations such as FactCheck.org and PolitiFact to help identify false content.

Users who share fake news will be alerted that the article’s accuracy has been “disputed”. In addition, fake news publishers will lose financial incentives by being cut off from purchasing ad space as the majority of false content is “financially motivated”.

In its announcement, Facebook emphasised that it would not impinge on its users’ right to freedom of speech.

“We believe in giving people a voice and that we cannot become arbiters of truth ourselves, so we’re approaching this problem carefully.”

The network’s vice president of product management, Adam Mosseri, also confirmed that people will still be able to share stories that have been flagged as fake on their timeline, “…because we believe in giving people a voice.”

Facebook’s response to fake news comes after a survey found that 25 per cent of Americans admitted to sharing fake news. Another 16 per cent said they had difficulty distinguishing fact from fiction, and 64 per cent of those surveyed were concerned about the potential consequences of sharing fake news.

The new features have begun rolling out in the U.S. and are expected to change the way news is shared on the social network.